About

My name is Ana Maria Mendonça and I am an Associate Professor in the Departamento de Engenharia Eletrotécnica (DEEC) of the Faculdade de Engenharia da Universidade do Porto (FEUP). I completed my PhD at this university in 1994. I was a researcher at the Instituto de Engenharia Biomédica (INEB) until 2014, and in 2015 I joined the Centro de Investigação em Engenharia Biomédica of INESC TEC as a senior researcher.

In higher education and research management, I served as a member of the Executive Council of DEEC and, more recently, as Deputy Director of FEUP. At INEB, I sat on the Institute's Board, first as a member and later as its President.

I was an elected member of the Scientific Council of FEUP and am currently a member of the school's Pedagogical Council. I have served on the scientific committees of several FEUP study cycles and am currently Director of the Bachelor's and Master's programmes in Bioengineering, the Master's in Biomedical Engineering, and the Doctoral Programme in Biomedical Engineering at FEUP.

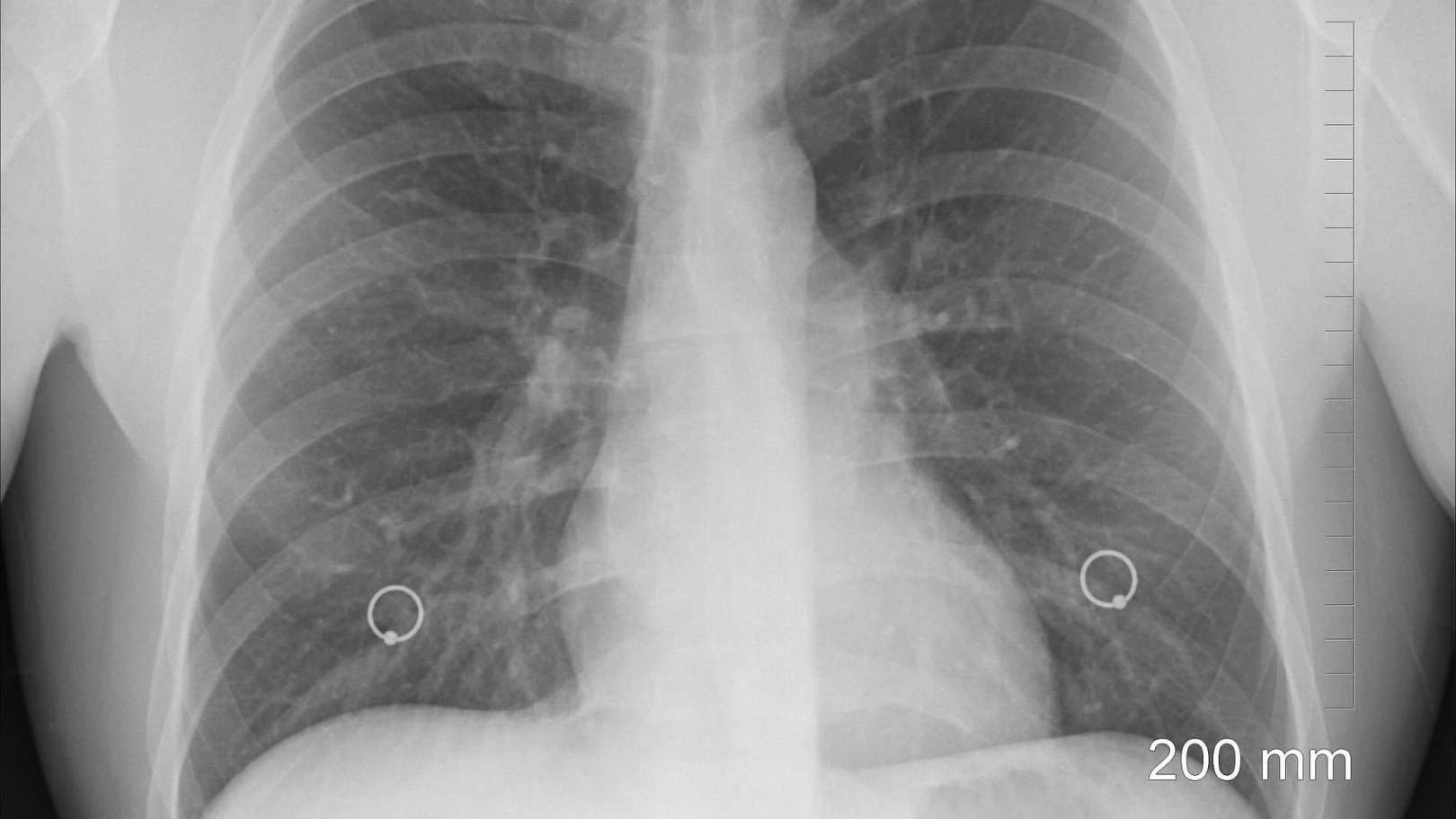

I have taken part in a number of research projects, as a researcher or as principal investigator, mainly in the area of biomedical imaging. My research has centred on developing image analysis and classification methods that extract useful information from medical images to support medical diagnosis. My earlier work was devoted mostly to pathologies of the retina and the lung and to genetic diseases, while my current work focuses on developing computer-aided diagnosis systems for ophthalmology and radiology.